The recently released Red Hat OpenStack Platform 16.2 introduced a tech preview integration feature called the timemaster service. Time synchronization is a vital piece of vRAN infrastructure (explained towards the end of this blog), and timemaster provides HA between the PTP (Precision Time Protocol) and chrony (NTP-based) time services. Let's understand it.

Before we get into the timemaster service, we need to know a bit about the PTP and chrony time services.

PTP overview:

- PTP is a protocol standardized in IEEE 1588, developed to provide higher-precision time synchronization over a network. PTP uses hardware-based timestamping of packets, leveraging the timestamping capability of the NIC.

- With hardware-based timestamping support, the NIC has its own on-board clock, which is used to timestamp the received and transmitted PTP messages. It is this on-board clock that synchronizes to the master clock; the computer's system clock is then synchronized from the NIC's hardware clock.

- The root timing reference/top level is called the grandmaster. The grandmaster transmits synchronization information to the clocks residing on its network segment. The boundary clocks present on that segment then relay accurate time to the other segments to which they are also connected.

- A clock with only one port can be either a master or a slave. Such a clock is called an ordinary clock (OC).

- A clock with multiple ports can be a master on one port and a slave on another. Such a clock is called a boundary clock (BC).

- The open source implementation of the protocol for Linux is linuxptp, a PTPv2 implementation according to IEEE standard 1588.

- The linuxptp package includes the ptp4l and phc2sys programs for clock synchronization. The ptp4l program implements the PTP boundary clock and ordinary clock.

- By default, ptp4l sends its messages to /var/log/messages. Specifying the -m option also enables logging to standard output, which can be useful for debugging.

- When there are multiple PTP domains available on the network, or fallback to NTP/chronyd is needed, the timemaster program can be used to synchronize the system clock to all available time sources.

- In addition to the standard NTP server, we need a ‘PTP grandmaster’ to provide a reference clock. Ideally, this would be a hardware appliance providing IEEE 1588 frames from GNSS (Global Navigation Satellite System) data.
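To make the synchronization step concrete, here is a minimal sketch of the arithmetic behind the IEEE 1588 delay request-response exchange. It is not linuxptp's code: the function name and the timestamp values are hypothetical, and the calculation assumes the network path is symmetric.

```python
def ptp_offset_and_delay(t1, t2, t3, t4):
    """Return (offset, mean_path_delay) in the unit of the inputs.

    t1: master sends Sync            (master clock)
    t2: slave receives Sync          (slave clock)
    t3: slave sends Delay_Req        (slave clock)
    t4: master receives Delay_Req    (master clock)
    Assumes the path delay is the same in both directions.
    """
    mean_path_delay = ((t2 - t1) + (t4 - t3)) / 2
    offset = ((t2 - t1) - (t4 - t3)) / 2  # slave time minus master time
    return offset, mean_path_delay


# Hypothetical timestamps in nanoseconds: the slave clock runs 500 ns
# ahead of the master and the one-way delay is 100 ns.
offset, delay = ptp_offset_and_delay(t1=1000, t2=1600, t3=2000, t4=1600)
print(offset, delay)
```

The slave then steers its clock by the computed offset. Hardware timestamping matters because any jitter in t1 to t4 goes straight into the result.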

Chrony overview:

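- Chrony is an open source implementation of the NTP protocol and the default NTP implementation in Red Hat distributions. The current version of NTP is NTPv4.

- NTP is intended to synchronize all participating computers to within a few milliseconds of Coordinated Universal Time (UTC). In the usual client/server mode, an NTP client polls its servers periodically; in broadcast/multicast mode, clients passively listen to time updates after an initial round-trip calibrating exchange.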

- chrony comes by default in Red Hat distributions and replaces ntpd.

- chrony implements the NTP protocol differently from ntpd and can adjust the system clock more rapidly.

- chrony has two main components: chronyd, a daemon that starts when the computer boots and runs in user space, and chronyc, a command-line interface for configuring and monitoring it.

Time for timemaster now.

Timemaster overview:

- timemaster is a program that uses ptp4l and phc2sys in combination with chronyd or ntpd to synchronize the system clock to NTP and PTP time sources. The PTP time is provided by phc2sys and ptp4l via SHM reference clocks to chronyd/ntpd, which can compare all time sources and use the best sources to synchronize the system clock.

- On start, timemaster reads a configuration file that specifies the NTP and PTP time sources, checks which network interfaces have and share a PTP hardware clock (PHC), generates configuration files for ptp4l and chronyd/ntpd, and starts the ptp4l, phc2sys, and chronyd/ntpd processes as needed. It writes the configuration files for chronyd, ntpd, and ptp4l to /var/run/timemaster/ (the runtime instances of the PTP and chronyd services). Please refer to the “Process flow” section for more information on how the system clock is set.

- The timemaster configuration file (/etc/timemaster.conf) is divided into sections. Each section starts with a line containing its name enclosed in brackets, followed by its settings. Each setting is placed on a separate line and contains the name of the option and its value, separated by whitespace characters. Empty lines and lines starting with # are ignored.

Below is a sample timemaster.conf:
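The file format described above is simple enough to read with a few lines of Python. The following is only an illustrative sketch of those rules; `parse_timemaster_conf` is a hypothetical helper, not part of linuxptp, and the sample text is a shortened config.

```python
def parse_timemaster_conf(text):
    """Parse timemaster.conf-style text into {section: [(name, value), ...]}."""
    sections = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # empty lines and comments are ignored
        if line.startswith("[") and line.endswith("]"):
            current = line[1:-1]
            sections[current] = []
            continue
        if current is None:
            raise ValueError("setting found before any section: " + line)
        parts = line.split(None, 1)  # option name, then the rest as its value
        value = parts[1] if len(parts) > 1 else ""
        sections[current].append((parts[0], value))
    return sections


SAMPLE = """\
# Configuration file for timemaster
[ptp_domain 0]
interfaces eno1

[timemaster]
ntp_program chronyd
"""

print(parse_timemaster_conf(SAMPLE))
```

Settings are kept as a list rather than a dict because sections such as [chrony.conf] can repeat the same option name (for example several server lines).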

    [root@computesriov-0 heat-admin]# cat /etc/timemaster.conf
    # Configuration file for timemaster

    #[ntp_server ntp-server.local]
    #minpoll 4
    #maxpoll 4

    [ptp_domain 0]
    interfaces eno1
    #ptp4l_setting network_transport l2
    #delay 10e-6

    [timemaster]
    ntp_program chronyd

    [chrony.conf]
    #include /etc/chrony.conf
    server clock.xyz.com iburst minpoll 6 maxpoll 10

    [ntp.conf]
    includefile /etc/ntp.conf

    [ptp4l.conf]
    #includefile /etc/ptp4l.conf
    network_transport L2

    [chronyd]
    path /usr/sbin/chronyd

    [ntpd]
    path /usr/sbin/ntpd
    options -u ntp:ntp -g

    [phc2sys]
    path /usr/sbin/phc2sys
    #options -w

    [ptp4l]
    path /usr/sbin/ptp4l
    #options -2 -i eno1

In this configuration, timemaster configures and starts chronyd with one NTP server (clock.xyz.com) and one SHM reference clock (fed by phc2sys). With minpoll 6 and maxpoll 10, the NTP server is polled at intervals between 64 seconds (2^6) and 1024 seconds (2^10). The PTP clock on the eno1 interface is synchronized by ptp4l in PTP domain number 0; phc2sys provides that PTP clock as an SHM reference clock to chronyd, and chronyd sets the system clock.

Below is the output when the timemaster service runs successfully on the system.
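chrony expresses minpoll and maxpoll as powers of two, in seconds. A quick sketch of that arithmetic, using a hypothetical helper name, shows what the values in the sample file translate to:

```python
def poll_interval_seconds(poll_exponent):
    """Convert a chrony minpoll/maxpoll value to an interval in seconds."""
    return 2 ** poll_exponent


# Values from the sample timemaster.conf above.
print(poll_interval_seconds(6))   # minpoll 6 on the active server line
print(poll_interval_seconds(10))  # maxpoll 10 on the active server line
print(poll_interval_seconds(4))   # minpoll/maxpoll 4 in the commented-out section
```

So the active server line polls between 64 and 1024 seconds, while the commented-out minpoll 4 / maxpoll 4 setting would pin the interval at 16 seconds.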

    [root@computesriov-0 heat-admin]# systemctl status timemaster
    ● timemaster.service - Synchronize system clock to NTP and PTP time sources
       Loaded: loaded (/usr/lib/systemd/system/timemaster.service; enabled; vendor preset: disabled)
       Active: active (running) since Tue 2020-08-25 19:10:18 UTC; 2min 6s ago
     Main PID: 2573 (timemaster)
        Tasks: 6 (limit: 357097)
       Memory: 5.1M
       CGroup: /system.slice/timemaster.service
               ├─2573 /usr/sbin/timemaster -f /etc/timemaster.conf
               ├─2577 /usr/sbin/chronyd -n -f /var/run/timemaster/chrony.conf
               ├─2582 /usr/sbin/ptp4l -l 5 -f /var/run/timemaster/ptp4l.0.conf -H -i eno1
               ├─2583 /usr/sbin/phc2sys -l 5 -a -r -R 1.00 -z /var/run/timemaster/ptp4l.0.socket -t [0:eno1] -n 0 -E ntpshm -M 0
               ├─2587 /usr/sbin/ptp4l -l 5 -f /var/run/timemaster/ptp4l.1.conf -H -i eno2
               └─2588 /usr/sbin/phc2sys -l 5 -a -r -R 1.00 -z /var/run/timemaster/ptp4l.1.socket -t [0:eno2] -n 0 -E ntpshm -M 1

    Aug 25 19:11:53 computesriov-0 ptp4l[2587]: [152.562] [0:eno2] selected local clock e4434b.fffe.4a0c24 as best master

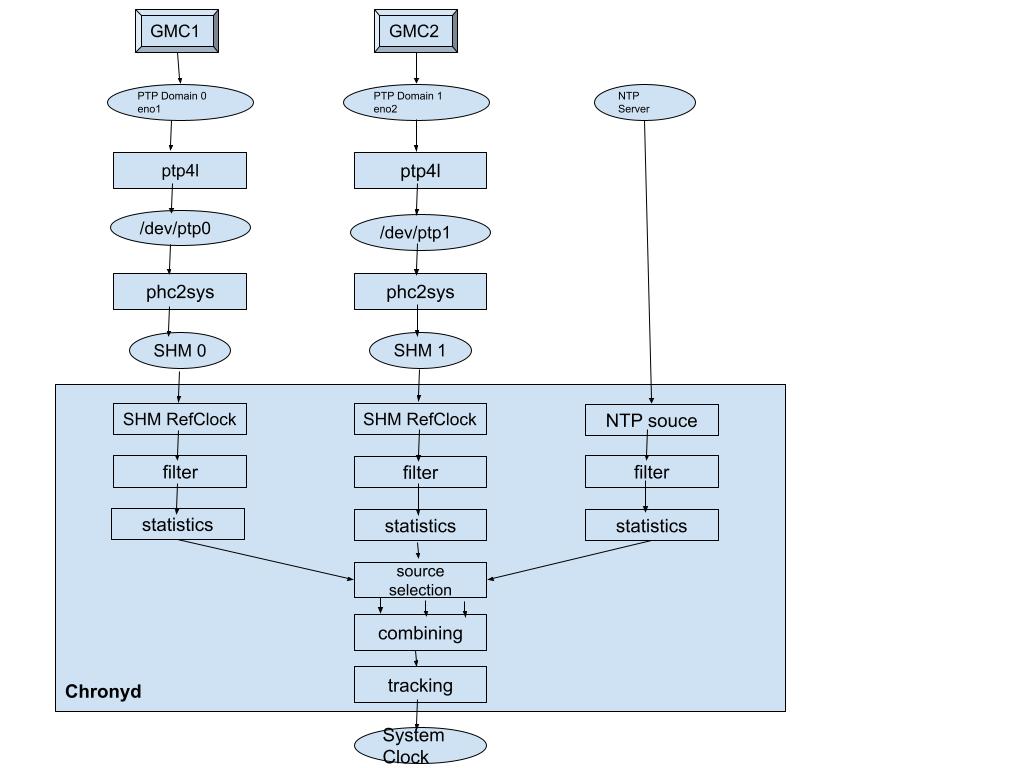

Let's try to understand what I stated above with a flow chart.

Process flow:

Let’s take a look at how nodes use the timemaster service to get HA between different time sources.

Here, chronyd uses the time references provided by eno1, eno2, and the NTP server. If eno1 becomes unstable or a falseticker, chronyd moves to eno2; if eno2 becomes unavailable too, it moves to the NTP server. If eno1 and eno2 recover, chronyd uses them again and resets the system time.

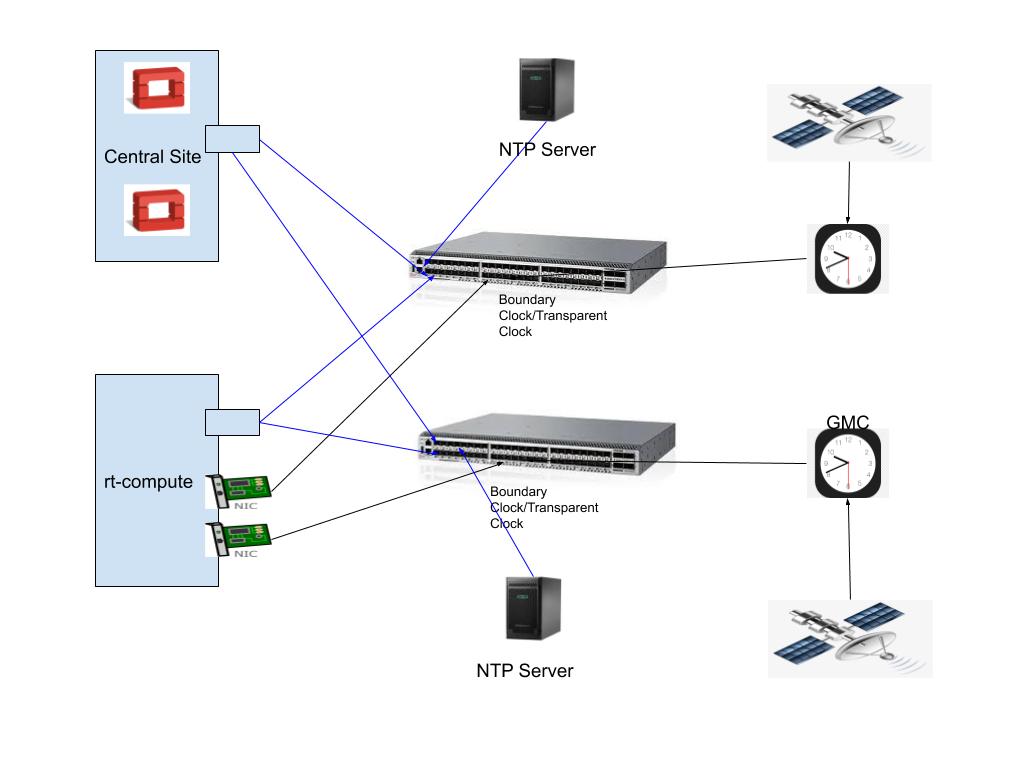
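The failover order in this flow can be modelled in a few lines. This is a deliberately simplified sketch: chronyd's real source selection compares the measured statistics of all sources, whereas the hypothetical `select_source` helper below only walks a fixed preference list.

```python
def select_source(sources):
    """Return the first usable source name, or None if all are unusable.

    sources: list of (name, usable) tuples in order of preference.
    """
    for name, usable in sources:
        if usable:
            return name
    return None


# All sources healthy: the PTP clock on eno1 is used.
print(select_source([("PTP eno1", True), ("PTP eno2", True), ("NTP server", True)]))
# eno1 is a falseticker: fall back to eno2.
print(select_source([("PTP eno1", False), ("PTP eno2", True), ("NTP server", True)]))
# Both PTP sources are gone: fall back to the NTP server.
print(select_source([("PTP eno1", False), ("PTP eno2", False), ("NTP server", True)]))
```

Because the list is re-evaluated every time, a recovered eno1 is picked again automatically, which matches the recovery behaviour described above.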

The picture below shows a sample field implementation with OpenStack.

- The grandmaster clock syncs with a GNSS receiver.

- It acts as a source for both the NTP and PTP protocols.

- The NTP servers are used by the Controller nodes, where the time precision of NTP is enough.

- Compute nodes run PTP along with chronyd to synchronize their system time.

- Boundary clocks/transparent clocks provide HA for the network path as well.

Note: A PTP grandmaster clock can act as an NTP source as well.

Switch configurations:

The switches must be configured to allow the multicast Ethernet frames. Each switch should be configured in either “e2e transparent mode” or “boundary clock mode”.

Transparent clock and boundary clock switches improve accuracy and reduce delay variation. A boundary clock is generally preferred where the network is congested and scaled. The PTPv2 header contains an 8-byte “correctionField”. A transparent clock switch adds the time each PTP message spent inside the switch (its residence time) to this field, so the end clock can remove that delay variation from its calculation. A boundary clock switch instead synchronizes its own clock to the upstream master and acts as a master towards its downstream ports, so the end clock receives freshly timestamped messages.

In my deployment, I had a switch that supports only transparent clock mode.

    show ptp global-information
    PTP Global Configuration:
    Transparent-clock-config : ENABLED
    Transparent-clock-status : ACTIVE

The NICs attached to the nodes must support PTP. You can check this using “ethtool -T” followed by the interface name.

Alright, we know about the timemaster service now. Let's see how to configure it on OpenStack overcloud nodes.
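When checking many nodes, the ethtool output can be inspected from a script. The sketch below looks for the three capability flags that indicate hardware timestamping support. The helper names are hypothetical, and the sample text is an illustrative excerpt rather than output captured from the nodes in this post.

```python
import subprocess

REQUIRED_FLAGS = (
    "SOF_TIMESTAMPING_TX_HARDWARE",
    "SOF_TIMESTAMPING_RX_HARDWARE",
    "SOF_TIMESTAMPING_RAW_HARDWARE",
)


def missing_hw_timestamp_flags(ethtool_output):
    """Return the hardware timestamping flags absent from `ethtool -T` output."""
    return [flag for flag in REQUIRED_FLAGS if flag not in ethtool_output]


def read_ethtool(interface):
    """Run `ethtool -T <interface>` and return its text (needs ethtool installed)."""
    result = subprocess.run(
        ["ethtool", "-T", interface], capture_output=True, text=True, check=True
    )
    return result.stdout


# Illustrative excerpt of `ethtool -T` output for a PTP-capable NIC.
SAMPLE_OUTPUT = """\
Time stamping parameters for eno1:
Capabilities:
    hardware-transmit     (SOF_TIMESTAMPING_TX_HARDWARE)
    hardware-receive      (SOF_TIMESTAMPING_RX_HARDWARE)
    hardware-raw-clock    (SOF_TIMESTAMPING_RAW_HARDWARE)
PTP Hardware Clock: 0
"""

print(missing_hw_timestamp_flags(SAMPLE_OUTPUT))
```

On a real node you would pass `read_ethtool("eno1")` instead of the sample text; an empty list means all three flags are present.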

Openstack deployment & configuration:

Currently, OSP (Red Hat OpenStack Platform) provides the following default service (chronyd) for time synchronization on overcloud nodes. It is configured in roles_data.yaml.

    - OS::TripleO::Services::Timesync

This service is added to all roles and configures “chrony” on overcloud nodes.

The Timesync service in OSP uses chrony, and its parameter is already available in the TripleO Heat templates.

    # NTP server configuration.
    NtpServer: ['clock.redhat.com']

To use the timemaster service, we need to remove Timesync and add TimeMaster in roles_data.yaml for each role that should run the timemaster service.

    # - OS::TripleO::Services::Timesync
    - OS::TripleO::Services::TimeMaster
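If you maintain several custom roles, the same swap can be scripted. The following is a minimal sketch that works on a plain Python list of service names; `use_timemaster` is a hypothetical helper, and the short service list is only an illustration, not a complete role definition.

```python
TIMESYNC = "OS::TripleO::Services::Timesync"
TIMEMASTER = "OS::TripleO::Services::TimeMaster"


def use_timemaster(services):
    """Return a copy of a role's service list with Timesync replaced by TimeMaster."""
    return [TIMEMASTER if service == TIMESYNC else service for service in services]


# Illustrative excerpt of a role's ServicesDefault list.
role_services = [
    "OS::TripleO::Services::Sshd",
    "OS::TripleO::Services::Timesync",
]

print(use_timemaster(role_services))
```

Replacing the entry in place, rather than appending TimeMaster, makes sure a role never ends up with both time services enabled.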

We have to configure the two role-specific TripleO Heat parameters below in the network environment file.

    ComputeSriovParameters:
      # Example
      PTPInterfaces: '0:eno1,1:eno2'
      PTPMessageTransport: 'L2'

With these, compute nodes in a role with the timemaster service get timemaster configured with the provided interfaces and message transport.

Now, let's talk about vRAN and how it relates to what I have explained so far.
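To see how a PTPInterfaces value could map onto timemaster.conf, here is a rough sketch. It assumes the number before each colon is the PTP domain and the name after it is the interface. Both helper functions are hypothetical and are not TripleO's actual templating code.

```python
def parse_ptp_interfaces(value):
    """Turn '0:eno1,1:eno2' into {0: ['eno1'], 1: ['eno2']}."""
    domains = {}
    for item in value.split(","):
        domain, _, interface = item.strip().partition(":")
        domains.setdefault(int(domain), []).append(interface)
    return domains


def render_ptp_domains(domains):
    """Render [ptp_domain N] sections in timemaster.conf syntax."""
    lines = []
    for domain in sorted(domains):
        lines.append(f"[ptp_domain {domain}]")
        lines.append("interfaces " + " ".join(domains[domain]))
    return "\n".join(lines)


domains = parse_ptp_interfaces("0:eno1,1:eno2")
print(render_ptp_domains(domains))
```

Under that assumption, each domain gets its own ptp4l instance, which is consistent with the two ptp4l processes (eno1 and eno2) in the systemctl output earlier.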

vRAN use case:

Mobile networks need good synchronization in the radio access network (RAN) between cell towers and connected devices, so that handover from one cell to another happens without the user or connected device noticing any drop or interference in connection performance. Cell sites also need to synchronize back to a centralized primary reference time clock (PRTC), so that essentially all RAN portions of the network are in sync with each other. OpenStack is a cloud infrastructure where applications are virtualized and hosted. So RAN becomes vRAN, with virtualized baseband units (vBBUs) running in the cloud.

However, the synchronization capabilities of RAN networks and their applications, such as frequency synchronization and phase synchronization, should not be impacted when they run in the cloud. One of the main time synchronization standards is IEEE 1588 (1588-2008 and 1588-2019, commonly known as 1588v2 and 1588v2.1), which specifies the Precision Time Protocol (PTP) for packet-based networks in a range of applications, including mobile networks through the “Telecoms Profile” definitions of the spec. 1588v2 provides a mechanism for using specific timing packets to deliver frequency information, and by adding very accurate timestamps to the packets, it can also deliver time of day and accurate phase information.

Thus, providing a PTP time service on real-time overcloud compute nodes becomes an absolute necessity if an OpenStack cloud is to host such time-sensitive networks. The timemaster service does that job on the nodes.

I hope this blog helped you understand the timemaster service, the reason for its addition to OpenStack, and its use case with vRAN.

For more information on this topic, you can refer to the public OpenStack documentation as well.